When Volume Fits but Execution Fails: The Hidden Risk of Unverified Product Data

You open the routing dashboard on an otherwise unremarkable Tuesday morning. The solver reports a ninety-two percent volumetric fill rate, and the plan looks pristine on the monitor. You start the dispatch workflow. About three hours later, the yard supervisor calls your direct line: the trailer is half-empty. Why? The first pallet stack overshoots the loading dock clearance by forty millimeters, because the master data registry recorded the internal box cavity while warehouse staff measure the reinforced external crate. Or perhaps the optimizer treated a corrugated electronics carton as if it were a solid concrete block, and the middle stacking tier collapsed under sudden braking. The calculation ran flawlessly. It simply ran on fabricated inputs.

Why We Chronically Underestimate the Failure Points

Logistics planning suffers from a persistent fill-rate bias. The shortcut tells planners that if utilization climbs toward the theoretical maximum, the 3D packing heuristic must have absorbed any minor specification drift. That mental model breaks on contact with physical reality. A spatial arrangement algorithm cannot silently correct swapped weight units or compensate for stacking tolerances that nobody entered. Feed it unverified dimension strings and it will simply arrange those false coordinates into mathematically tight configurations. The center of gravity shifts toward the front axle. The weight distribution drifts beyond legal highway limits. Dock crews end up doing costly, time-consuming manual rework because the planning layer assumed a soft-bottomed cardboard carton had the compressive rigidity of machined steel. The cascade starts at the data ingestion layer, not at the calculation phase.
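
To make the center-of-gravity point concrete, here is a minimal Python sketch, assuming a hypothetical `Placement` record that stores each item's weight and the distance of its center from the trailer's front wall. It computes the weight-averaged longitudinal center of gravity twice: once from a registry entry in which an 882 kg motor was wrongly assumed to be 882 lb and "converted", and once from the true weights. The SKUs, weights, and positions are illustrative, not real data.

```python
from dataclasses import dataclass

LB_TO_KG = 0.45359237  # exact definition of the international pound


@dataclass
class Placement:
    """Hypothetical record for one item placed in the trailer."""
    sku: str
    weight_kg: float   # weight the data says the item has, in kilograms
    center_x_m: float  # distance of the item's center from the front wall, in meters


def longitudinal_cog(items: list[Placement]) -> float:
    """Weight-averaged distance (m) of the load's center of gravity from the front wall."""
    total = sum(p.weight_kg for p in items)
    if total <= 0:
        raise ValueError("total weight must be positive")
    return sum(p.weight_kg * p.center_x_m for p in items) / total


# Registry view: the supplier's 882 kg was assumed to be pounds and converted.
planned = [
    Placement("MOTOR-A", 882.0 * LB_TO_KG, 1.0),
    Placement("PANEL-B", 400.0, 7.0),
]
# Physical reality: the motor really weighs 882 kg.
actual = [
    Placement("MOTOR-A", 882.0, 1.0),
    Placement("PANEL-B", 400.0, 7.0),
]

planned_cog = longitudinal_cog(planned)
actual_cog = longitudinal_cog(actual)
print(f"planned CoG: {planned_cog:.2f} m, actual CoG: {actual_cog:.2f} m")
```

With these numbers the plan places the center of gravity about 4.00 m from the front wall, while the real load centers near 2.87 m, more than a meter further forward. The solver cannot see the difference, because both arrangements are equally valid geometry.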

Key Operations and Their Underlying Physical Importance

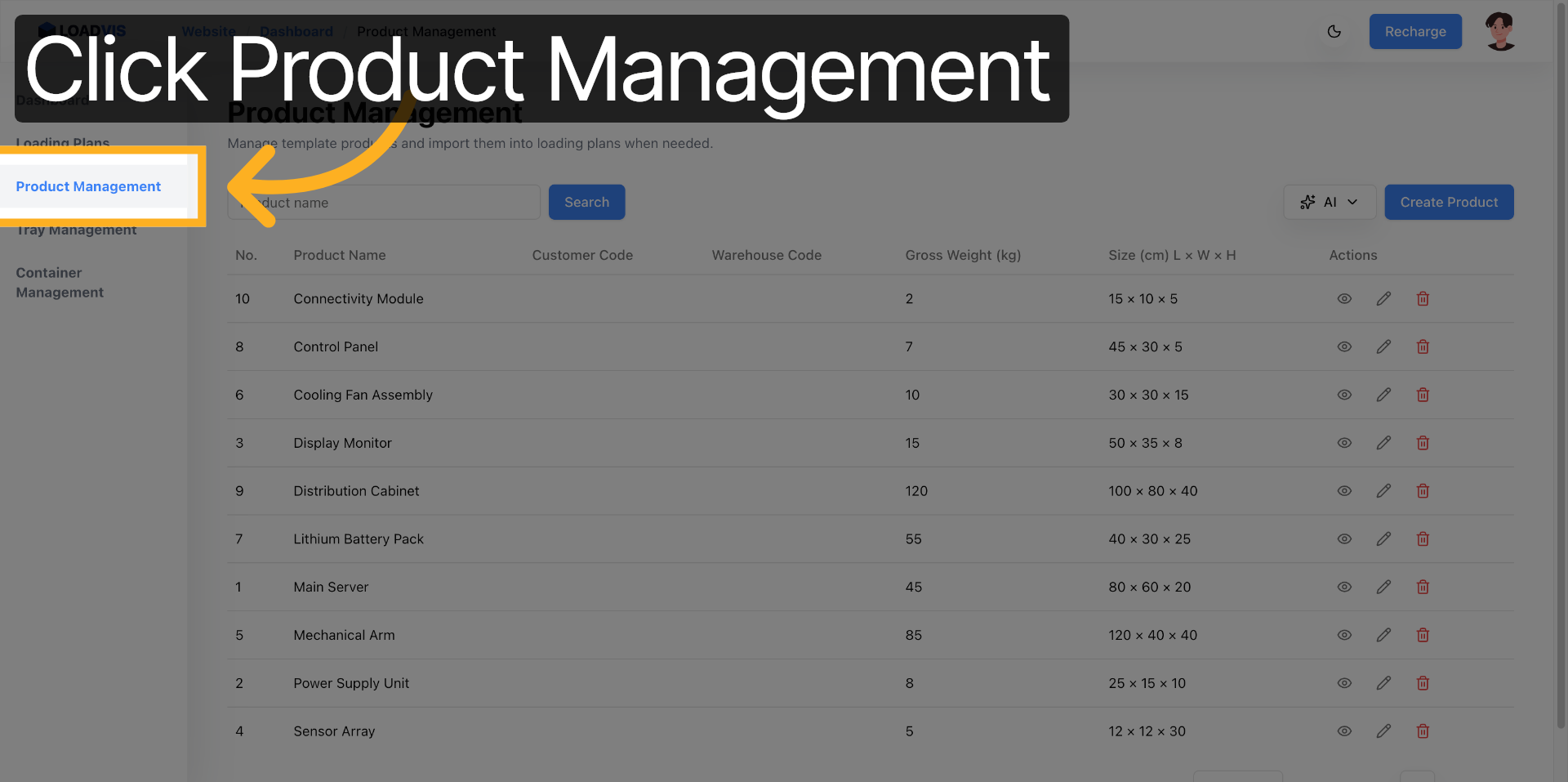

Look closely at how the platform ingests raw material specifications. The point here is not the mechanics of the user interface but the physical reasoning behind why each configuration step matters.

First, you run automated AI text parsing against unstructured supplier emails. The recognition engine normalizes linguistic variations and extracts length-by-width-by-height strings, but it has no way to check physically whether those numbers describe the internal void space or the heavily taped external packaging. You must also verify gross weight entries manually before they enter the system database, because a single misplaced decimal point changes the weight distribution across the entire cargo bay.
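
A minimal Python sketch of what an extraction step can and cannot do. The regular expression, the accepted units, and the `parse_dimensions` and `decimal_shift_suspected` helpers are assumptions for illustration, not the platform's parser. The code can normalize a dimension string to millimeters, but it can only flag, never decide, whether the numbers are internal or external; likewise it can only flag a weight that looks like a shifted decimal point relative to the last verified value.

```python
import re

# Matches strings such as "600 x 400 x 350 mm" or "60x40x35cm".
# The accepted separators and units are assumptions for this sketch.
DIM_PATTERN = re.compile(
    r"(\d+(?:\.\d+)?)\s*[x×*]\s*(\d+(?:\.\d+)?)\s*[x×*]\s*(\d+(?:\.\d+)?)\s*(mm|cm|m|in)\b",
    re.IGNORECASE,
)
TO_MM = {"mm": 1.0, "cm": 10.0, "m": 1000.0, "in": 25.4}


def parse_dimensions(text):
    """Return ((length, width, height) in mm, review flags), or (None, flags)."""
    match = DIM_PATTERN.search(text)
    if match is None:
        return None, ["no L x W x H string found"]
    *values, unit = match.groups()
    factor = TO_MM[unit.lower()]
    dims = tuple(float(v) * factor for v in values)
    flags = []
    lowered = text.lower()
    # The parser cannot tell an inner cavity from outer packaging; unless the
    # source text says which one it is, a human has to confirm.
    if not any(word in lowered for word in ("external", "outer", "overall")):
        flags.append("confirm: internal or external dimensions?")
    return dims, flags


def decimal_shift_suspected(new_kg, previous_kg, tolerance=0.15):
    """Flag a new weight that is roughly 10x or 1/10 of the last verified value."""
    if previous_kg <= 0:
        return False
    ratio = new_kg / previous_kg
    return any(abs(ratio - k) / k <= tolerance for k in (10.0, 0.1))
```

For example, `parse_dimensions("Carton 60x40x35cm, gross 12.5 kg")` yields `(600.0, 400.0, 350.0)` together with the internal-or-external flag, and `decimal_shift_suspected(125.0, 12.5)` is `True`. Both results are prompts for a human check, not corrections.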

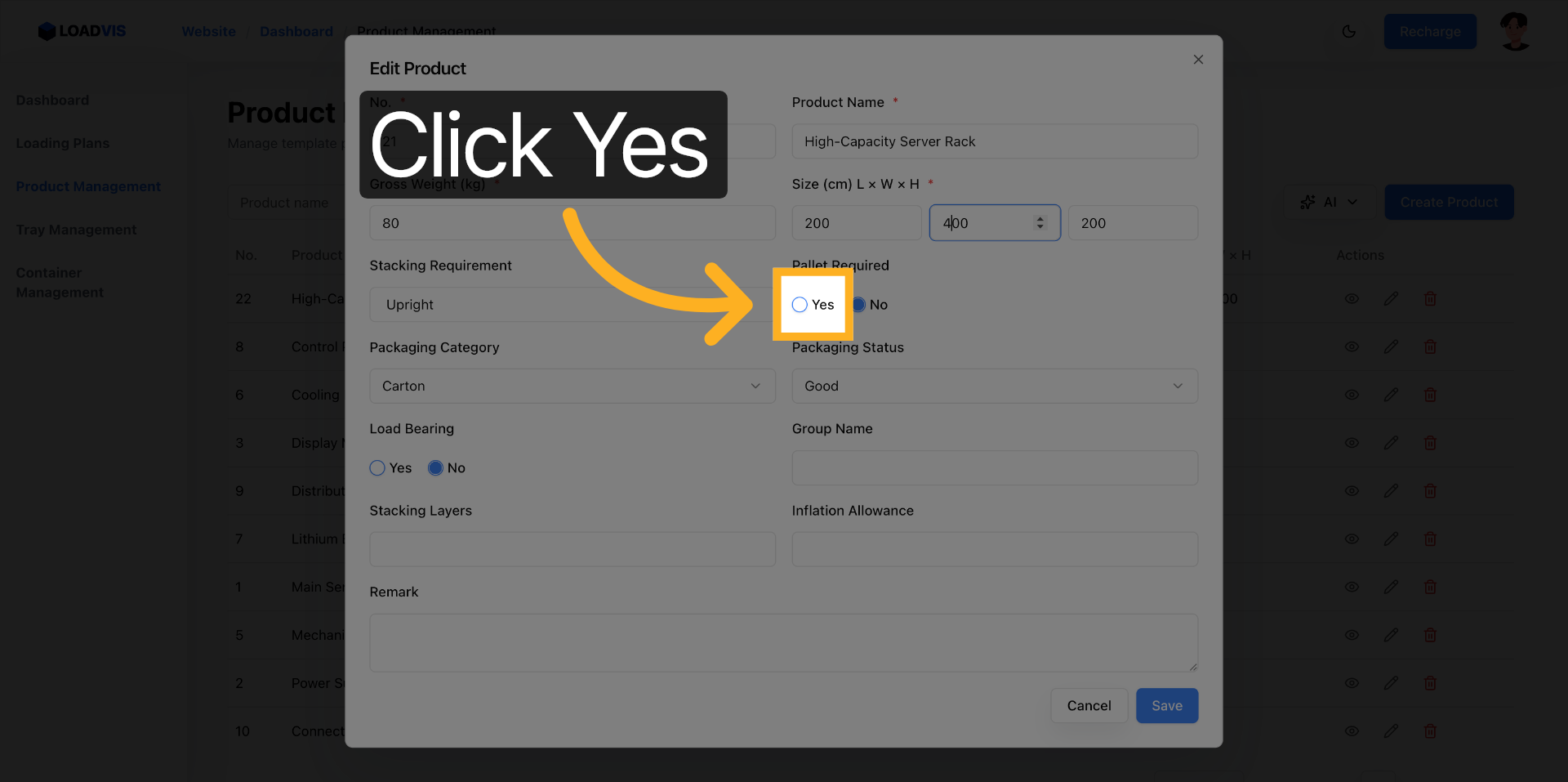

When you enable the pallet requirement toggle while editing parameters, the software changes the baseline constraints the spatial algorithm works from. It reserves vertical clearance for the wooden deck boards and recalibrates the two-dimensional footprint constraints to account for forklift tine insertion gaps.
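
A rough Python sketch of what enabling a pallet requirement changes, assuming a EUR/EPAL pallet (1200 × 800 mm footprint, 144 mm tall) and a hypothetical per-side handling gap for forklift access. The names `PalletSpec`, `pallet_constraints`, and `stack_fits` are illustrative, not the platform's API. The same 2500 mm stack that clears a 2600 mm opening on its own no longer fits once a pallet deck sits under it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PalletSpec:
    length_mm: float
    width_mm: float
    deck_height_mm: float


# EUR/EPAL pallet; substitute your own pallet standard here.
EUR_PALLET = PalletSpec(length_mm=1200, width_mm=800, deck_height_mm=144)


def pallet_constraints(clearance_height_mm, pallet, handling_gap_mm):
    """Return (max cargo height, floor slot length, floor slot width) in mm.

    The pallet deck consumes part of the vertical clearance, and each pallet
    claims its own outline plus a hypothetical handling gap on every side.
    """
    max_cargo_height = clearance_height_mm - pallet.deck_height_mm
    if max_cargo_height <= 0:
        raise ValueError("the pallet deck alone exceeds the clearance height")
    slot_length = pallet.length_mm + 2 * handling_gap_mm
    slot_width = pallet.width_mm + 2 * handling_gap_mm
    return max_cargo_height, slot_length, slot_width


def stack_fits(stack_height_mm, clearance_height_mm, use_pallet, pallet=EUR_PALLET):
    """Check a cargo stack against the clearance, with or without a pallet under it."""
    reserved = pallet.deck_height_mm if use_pallet else 0
    return stack_height_mm + reserved <= clearance_height_mm


print(stack_fits(2500, 2600, use_pallet=False))  # True: 2500 <= 2600
print(stack_fits(2500, 2600, use_pallet=True))   # False: 2500 + 144 > 2600
```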

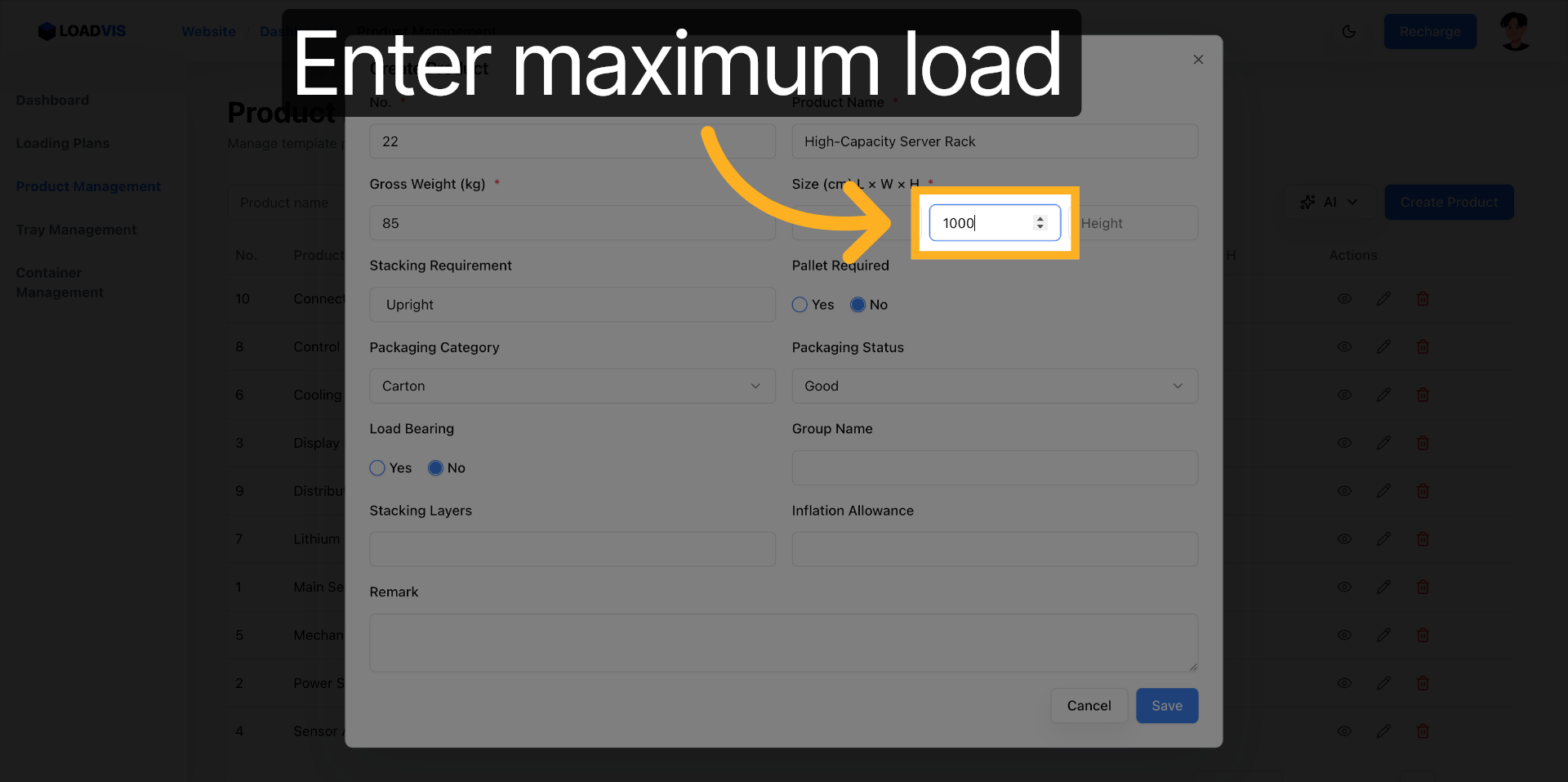

Setting both the maximum and minimum load capacity parameters defines the compression boundaries. The upper threshold prevents bottom-layer crates from being crushed under the mass stacked above them. The lower bound keeps the optimizer from generating structurally unstable floating voids in which heavy mechanical components could shift laterally during high-speed interstate transit.
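
One way to express these bounds, sketched in Python under stated assumptions: each item carries a hypothetical `min_load_kg` and `max_load_kg`, the load on an item is the total weight stacked above it, and the minimum is read here as a floor on that load (for items that should be held down rather than left loose). The platform's own semantics for the minimum may differ.

```python
from dataclasses import dataclass


@dataclass
class StackItem:
    """Hypothetical item record; a stack is listed from bottom to top."""
    sku: str
    weight_kg: float
    min_load_kg: float  # assumed floor on the weight resting on this item
    max_load_kg: float  # crush limit: the most weight this item may carry


def check_stack(stack: list[StackItem]) -> list[str]:
    """Return a message for every item whose top load falls outside its bounds."""
    violations = []
    for i, item in enumerate(stack):
        load_above = sum(other.weight_kg for other in stack[i + 1:])
        if load_above > item.max_load_kg:
            violations.append(
                f"{item.sku}: {load_above:g} kg above exceeds max {item.max_load_kg:g} kg"
            )
        elif load_above < item.min_load_kg:
            violations.append(
                f"{item.sku}: {load_above:g} kg above is below min {item.min_load_kg:g} kg"
            )
    return violations


stack = [
    StackItem("PUMP-1", 300, min_load_kg=0, max_load_kg=500),
    StackItem("CARTON-7", 20, min_load_kg=0, max_load_kg=60),  # corrugated electronics
    StackItem("MOTOR-3", 120, min_load_kg=0, max_load_kg=200),
]
for message in check_stack(stack):
    print(message)
```

Only CARTON-7 is reported: 120 kg of motor rests on a carton rated for 60 kg. Reordering the stack so the motor sits directly on the pump and the carton goes on top clears the violation.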

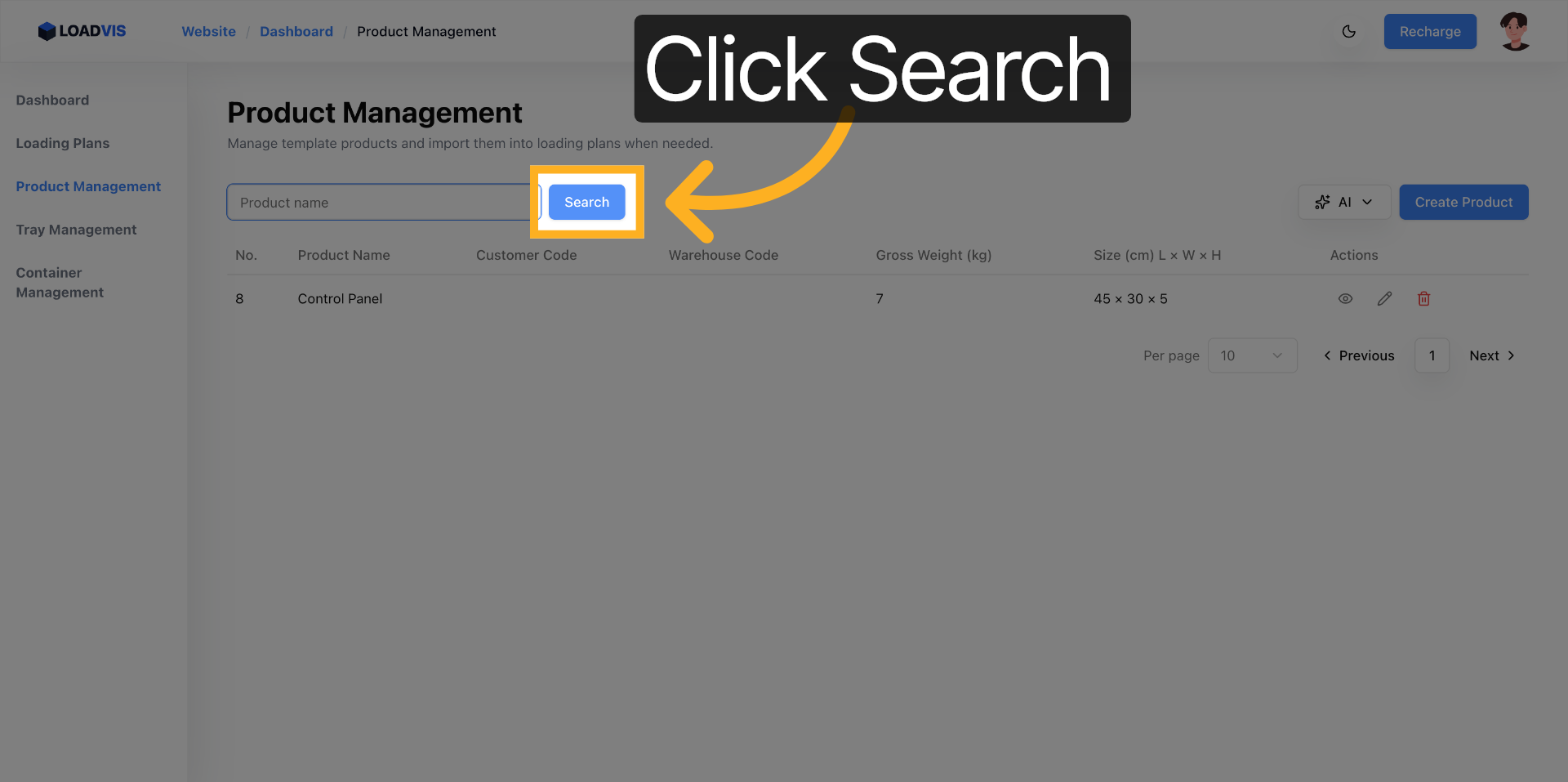

Before launching the final calculation, run a manual audit with the integrated list search. Fuzzy matching on specific inventory keywords lets you sweep the master registry for legacy records that still carry outdated, pre-pandemic specification sheets.
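
The audit sweep can be approximated with Python's standard `difflib` module: `difflib.get_close_matches` tolerates typos and plural forms, and a date filter keeps only records whose spec sheet was last verified before a cutoff you choose. The registry rows, the `stale_matches` helper, and the cutoff date are hypothetical; the platform's own search may use a different matching method.

```python
import difflib
from datetime import date

# Hypothetical registry rows: (sku, description, date the spec sheet was last verified)
REGISTRY = [
    ("EL-1001", "corrugated electronics carton", date(2019, 6, 3)),
    ("EL-1002", "corrugated electronic carton large", date(2023, 2, 14)),
    ("ST-2001", "steel machine crate", date(2018, 11, 20)),
]


def stale_matches(keyword, registry, verified_before, cutoff=0.6):
    """Fuzzy-match keyword against each word of every description and return
    the SKUs whose spec sheet was last verified before verified_before."""
    hits = []
    for sku, description, verified_on in registry:
        words = description.lower().split()
        if difflib.get_close_matches(keyword.lower(), words, n=1, cutoff=cutoff):
            if verified_on < verified_before:
                hits.append(sku)
    return hits


print(stale_matches("electronics", REGISTRY, date(2020, 1, 1)))
```

Both electronics records match the keyword, but only EL-1001 predates the cutoff, so it is the one that needs a fresh specification sheet.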

Corrupted inputs do not merely produce suboptimal routes. They produce hazardous load configurations that fail under real-world stress.

Wrong Approach Versus Reliable Workflow Execution

Here is where the workflow splits into two separate execution paths.

On the brittle side, you paste an unedited supplier email straight into the AI recognition field. You accept every parsed default without cross-checking physical engineering samples or dimensional drawings. You skip the pallet and structural weight toggles entirely. You assume that external vendors use the same units across all their procurement documents. You run the optimization immediately. The resulting shipping manifest looks impressively dense on the monitor, and it fails physically at the loading bay gate.

On the hardened side, you first strip conversational filler from the source text and isolate the core technical specifications. You run the AI parser against this cleaned input. You validate the extracted dimensions against documented warehouse standard operating procedures or a direct tape measurement. You explicitly enable the load-bearing flags and pallet handling requirements. You use the registry search to sweep for duplicate inventory identifiers and deprecated product codes. Only after this manual reconciliation is complete do you start the optimization engine. The resulting loading plan holds up under real operational stress.
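
The reconciliation phase amounts to a gate in front of the optimizer. Below is a minimal Python sketch, assuming a hypothetical `ItemRecord` that holds both the parsed dimensions and a tape measurement, plus flags for the pallet and load-bearing settings; the 5 mm tolerance is likewise an assumption. The optimizer should run only when `release_for_optimization` reports no problems.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

Dims = Tuple[float, float, float]  # (length, width, height) in millimeters


@dataclass
class ItemRecord:
    """Hypothetical registry entry after AI parsing."""
    sku: str
    parsed_dims_mm: Dims
    measured_dims_mm: Optional[Dims]  # None until someone takes a tape measurement
    pallet_flag_set: bool
    load_limits_set: bool
    deprecated: bool = False


def release_for_optimization(records, tolerance_mm=5.0):
    """Return (ready, problems); run the optimizer only when ready is True."""
    problems = []
    seen = set()
    for r in records:
        if r.sku in seen:
            problems.append(f"{r.sku}: duplicate identifier")
        seen.add(r.sku)
        if r.deprecated:
            problems.append(f"{r.sku}: deprecated product code")
        if r.measured_dims_mm is None:
            problems.append(f"{r.sku}: dimensions not physically verified")
        elif any(abs(p - m) > tolerance_mm
                 for p, m in zip(r.parsed_dims_mm, r.measured_dims_mm)):
            problems.append(f"{r.sku}: parsed and measured dimensions disagree")
        if not (r.pallet_flag_set and r.load_limits_set):
            problems.append(f"{r.sku}: pallet or load-bearing flags not configured")
    return not problems, problems


good = ItemRecord("EL-1001", (600, 400, 350), (602, 401, 350), True, True)
# Parsed inner cavity vs. measured outer crate: 40 mm off on every axis.
bad = ItemRecord("EL-2002", (600, 400, 350), (640, 440, 390), True, False)
ready, problems = release_for_optimization([good, bad])
print(ready)
for problem in problems:
    print(problem)
```

Collecting every problem instead of stopping at the first gives the reviewer one complete worklist per pass.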

Tool Boundaries Versus Mandatory Manual Confirmation

We need a clear line between what the software automates and what still requires human oversight.

The software excels at batch-structuring chaotic, unstructured text, validating the syntax of extracted numerical values, mapping complex operational constraints into mathematical logic, and persisting verified records consistently across user sessions. It will tirelessly format thousands of disparate inventory entries into a uniform computational schema. It will not, however, reconcile units when legacy procurement contracts mix imperial pounds with metric kilograms or confuse cubic feet with cubic meters. It cannot judge whether manual pallet jacks are practical on a specific regional warehouse floor. It cannot distinguish gross shipping mass from net payload weight when the supplier omits primary packaging data from its documentation. And it has no practical understanding of stack height tolerances driven by local humidity or of forklift mast clearance safety rules.
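
Because the platform will not reconcile mixed units on its own, it helps to normalize them explicitly before import and to refuse anything unrecognized rather than guess. A minimal Python sketch: the helper names and accepted unit spellings are assumptions, while the conversion factors are the exact international definitions (1 lb = 0.45359237 kg, 1 ft = 0.3048 m). It also refuses to derive a gross weight when the packaging weight is missing.

```python
from typing import Optional

LB_TO_KG = 0.45359237    # exact: international avoirdupois pound
FT3_TO_M3 = 0.3048 ** 3  # exact foot, cubed: 0.028316846592 m³

# Accepted spellings are assumptions; extend them to match your documents.
WEIGHT_UNITS = {"kg": 1.0, "kgs": 1.0, "kilogram": 1.0, "kilograms": 1.0,
                "lb": LB_TO_KG, "lbs": LB_TO_KG, "pound": LB_TO_KG, "pounds": LB_TO_KG}
VOLUME_UNITS = {"m3": 1.0, "m³": 1.0, "cbm": 1.0, "cubic meters": 1.0,
                "ft3": FT3_TO_M3, "ft³": FT3_TO_M3, "cu ft": FT3_TO_M3,
                "cubic feet": FT3_TO_M3}


def to_kg(value: float, unit: str) -> float:
    """Convert a weight to kilograms, rejecting units we do not recognize."""
    try:
        return value * WEIGHT_UNITS[unit.strip().lower()]
    except KeyError:
        raise ValueError(f"unrecognized weight unit: {unit!r}") from None


def to_m3(value: float, unit: str) -> float:
    """Convert a volume to cubic meters, rejecting units we do not recognize."""
    try:
        return value * VOLUME_UNITS[unit.strip().lower()]
    except KeyError:
        raise ValueError(f"unrecognized volume unit: {unit!r}") from None


def gross_weight_kg(net_kg: float, packaging_kg: Optional[float]) -> float:
    """Gross = net + packaging; refuse to guess when packaging data is missing."""
    if packaging_kg is None:
        raise ValueError("packaging weight missing: gross weight cannot be derived")
    return net_kg + packaging_kg
```

Raising on an unknown unit or a missing packaging weight is deliberate: it turns a silent data gap into a visible task for the person who owns the supplier relationship.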

These ground-level realities require mandatory human confirmation. You must apply professional engineering judgment to how abstract database fields map onto concrete on-site procedures. The platform is a mathematical constraint solver, not a physical environment simulator.

Summary

Spatial optimization algorithms operate strictly within the numerical boundaries of the data you feed them. If the master data contains speculative measurements or unverified physical tolerances, the output will be mathematically flawless but operationally disastrous transport manifests. Treat data verification as a non-negotiable engineering validation phase, not a bureaucratic checkbox. Verify physical dimensions against documented engineering tolerances. Cross-check cumulative weight distributions against certified warehouse dock scale readings. Audit historical inventory records completely before committing to final transport schedules. When the master dataset reflects ground truth, the solver performs exactly as designed. When the dataset diverges from reality, no amount of advanced spatial computation can prevent a compromised load from collapsing at the first staging area.
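
The dock-scale cross-check can be as simple as comparing each pallet's declared total with its scale reading. A minimal Python sketch; the `flag_scale_mismatches` helper, the data layout, and the 2% tolerance are assumptions, so use whatever tolerance your certified scales and procedures specify.

```python
def flag_scale_mismatches(pallets, tolerance_pct=2.0):
    """Return IDs of pallets whose declared total deviates from the scale reading.

    pallets maps a pallet ID to (declared item weights in kg, scale reading in kg).
    """
    flagged = []
    for pallet_id, (declared_kg, scale_kg) in pallets.items():
        if scale_kg <= 0:
            raise ValueError(f"{pallet_id}: scale reading must be positive")
        deviation_pct = 100.0 * abs(sum(declared_kg) - scale_kg) / scale_kg
        if deviation_pct > tolerance_pct:
            flagged.append(pallet_id)
    return flagged


pallets = {
    "P1": ([200.0, 150.0, 150.0], 505.0),  # declared 500 kg: about 1% off, accepted
    "P2": ([12.5] * 20, 2500.0),           # declared 250 kg: likely a decimal slip
}
print(flag_scale_mismatches(pallets))
```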